How State Policy Can Help Teachers Use AI Well

Smart guidance will give teachers the time, trust, and support to make technological leaps that advance learning.

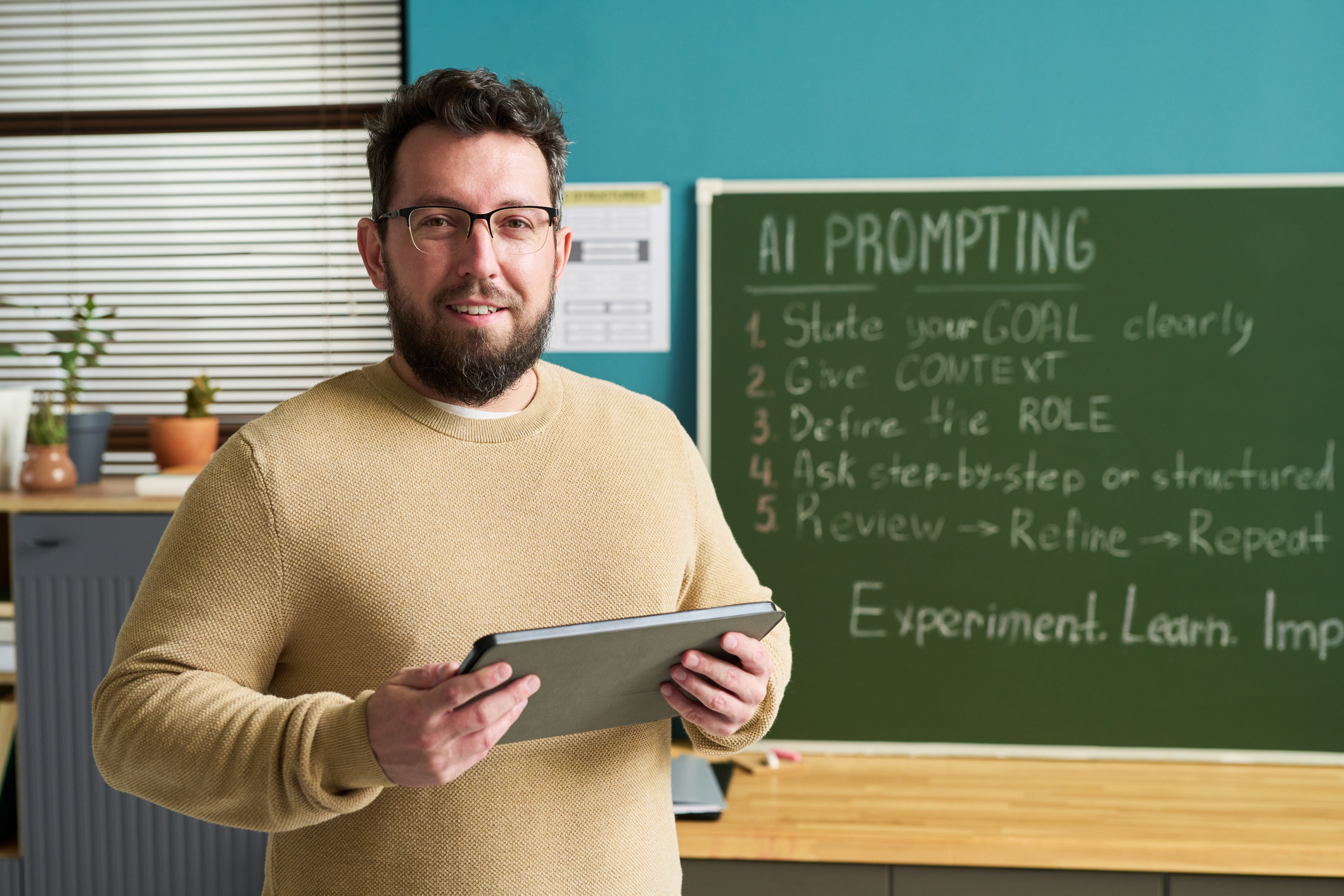

When sixth-grade teacher Sally Hubbard noticed math participation slipping in her Sacramento classroom, she tried everything she knew: small groups, new programs, varied activities. Nothing fully reversed the trend. Through an artificial intelligence (AI) exploration cohort, she began piloting tools that encouraged students to talk through problems, respond to one another, and get immediate feedback. The early days were noisy and messy, but over time, students who once avoided math began asking to keep working, showing greater confidence and stronger test performance.

As Hubbard described it, the shift required the permission of education leadership as much as technology: “Say yes. Allow teachers to play and try things and give them space to get in there and see what it’s all about.”[1] Her experience highlights a clear lesson: AI can meaningfully support student engagement, but only when teachers have the time, trust, and support to experiment.

AI is gaining a stronger foothold in classrooms across the country. In 2025, 54 percent of students reported that they used AI for school, a 15 percentage point increase compared with survey results from the past few years.[2] According to one Gallup survey, nearly two-thirds of K-12 teachers used AI during the 2024–25 school year.[3]

AI can rapidly reshape how educators manage their time, how students learn and receive support, and how schools approach long-standing problems. Whether this potential becomes reality depends on what happens next. Prior advances in ed tech made bold promises to personalize learning and close opportunity and achievement gaps, but many failed to deliver.[4] AI is no different. Without clear guidance and support from state policymakers, AI implementation may drift toward misuse or neglect, provide limited value to teachers, and widen existing equity and access gaps.[5] Moreover, without evidence-based guidance, grounded in learning science, on how AI can enhance student learning, its use could lead to cognitive offloading, reduced opportunities to develop critical thinking skills, and less authentic learning engagement.

Our organization, the Center on Reinventing Public Education (CRPE), has spent the past few years studying early adopters—that is, the teachers, schools, and districts at the forefront of systemic AI adoption—to understand how they are using AI to advance systemic change. We researched how teachers in California schools are addressing long-standing issues in education using AI tools and how early adopter districts and states nationwide are innovating with AI; conducted surveys of district leaders, educators, and students through the American School District Panel (in partnership with RAND); and convened teachers, district leaders, policymakers, ed tech experts, researchers, and advocates to identify conditions for greater educational coherence in the age of AI.[6]

Our research reveals how teachers and districts are using AI on the ground and how they are balancing risks and rewards. It also highlights opportunities for leaders of state boards of education to create policies that enable teachers to reclaim time, improve clarity, and strengthen connections with students. As Sally Hubbard’s experience illustrates, realizing AI’s promise depends not just on the technology but on the conditions that allow educators to experiment and build mastery. Right now, state boards have a unique opportunity to chart the course for long-term, effective AI use in schools, supporting educators and district leaders as they prepare students for an ever-shifting future.

While states play a critical role in shaping the conditions for effective AI use, they are not the primary implementers. In practice, states can set guardrails, provide clarity, and coordinate resources, while districts and schools lead the day-to-day decisions about how AI is used in classrooms. Early adopter districts consistently report that states are best positioned to support procurement, negotiate pricing, vet tools, define expectations for student AI literacy, and support research and evidence building, as well as to establish clear standards for data privacy and student safety. Beyond these core functions, states can create space for responsible experimentation by enabling pilots and aligning AI efforts with broader workforce or learning goals. As the technology and its implications continue to evolve, this balance will require flexible policies that can be updated over time.

Implementation in Classrooms and Schools

Our research highlights several patterns that characterize the current state of AI adoption in schools. Many teachers and districts prioritize using AI to reduce workload and free up time for more meaningful work. However, this is difficult to achieve alongside uneven access to AI policy guidance, training, tools, and infrastructure, as well as an overwhelming ed tech marketplace that is poorly aligned with what districts need and want. Across the board, educators, administrators, and other education leaders stressed that successful AI implementation must center, not supplant, human relationships.

Reducing teacher workload and freeing up time. Teachers are increasingly overworked and burned out, routinely working beyond their contracted hours and spending substantial time on grading, lesson planning, and paperwork.[7] In our fall 2025 survey of early adopter school districts, 78 percent reported that the primary goal of their AI-related initiatives was to help teachers manage workload, improve instructional quality, or foster innovation in instruction or assessment. As one district leader said, “From an instructional point of view, we really want to be able to provide teachers with tools that help them in lots of different ways, [with] one of the big ones being freeing up time.”[8]

When used thoughtfully, AI can return meaningful instructional time to teachers. In 2025, teachers who used AI tools weekly for tasks like lesson planning and grading, student feedback, and administrative work reported saving an estimated six hours per week.[9]

How teachers use that saved time matters as well. In our study on teacher AI pilots in California, educators said that using AI for administrative tasks freed them up to focus more on their students, allowing them to give more personalized feedback and spend more time working with learners in small groups or one-on-one.[10]

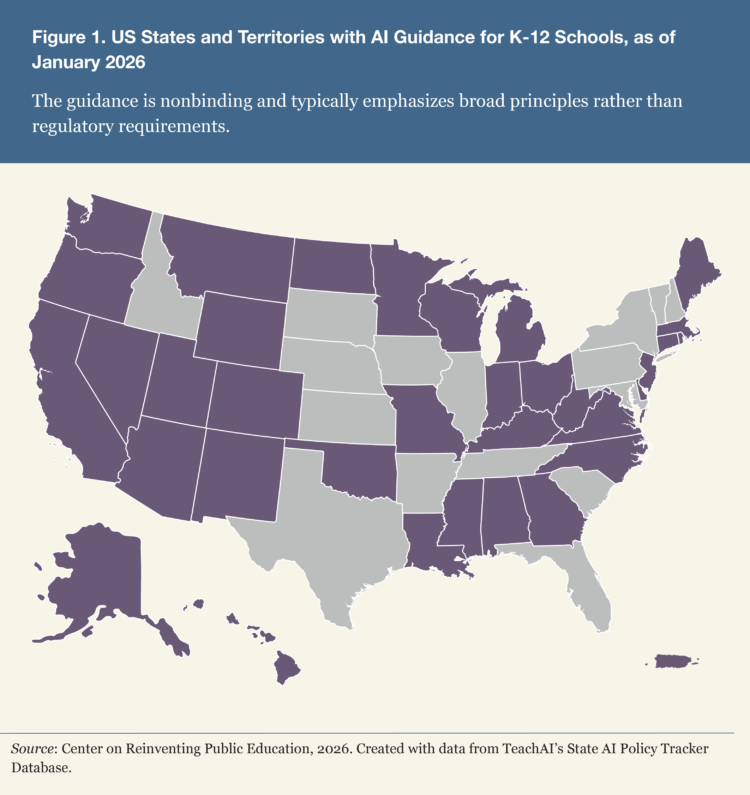

Uneven access to guidance, tools, training, and infrastructure. AI guidance at the state level is decentralized and fragmented. As of December 2025, 35 US states and territories provide official AI guidance for schools (figure 1),[11] but this guidance is nonbinding and typically emphasizes broad principles—such as ethical use, equity concerns, and high-level best practices—rather than specific regulatory requirements for districts.[12]

Meanwhile, districts continue to ask for more support in setting policies and guidance.[13] In fall 2025, 60 percent of early adopter districts reported having a district AI policy and 67 percent reported having guidance on staff AI use,[14] yet fewer than half of nationally surveyed principals (45 percent) reported that their school or district had provided any AI policy or guidance. In interviews, district leaders said they wanted more explicit guidance from state education agencies, particularly on defining responsible AI use.

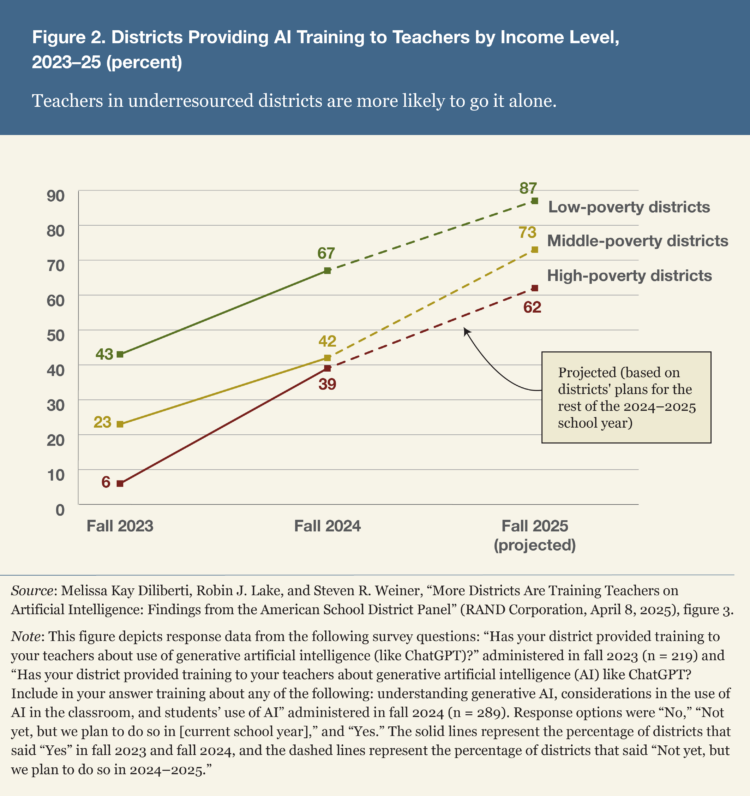

How teachers and students experience implementation and use also varies widely. According to a nationally representative survey from the 2023–24 school year, suburban, majority-White, and low-poverty school districts were about twice as likely to provide AI training for their teachers as urban, rural, or high-poverty districts (figure 2) and more likely to plan training.[15] Teachers in underresourced districts were more likely to go it alone, selecting tools without support.

Unsurprisingly, these equity gaps extend to students as well. In 2025, the gap between high-income and low-income teens who reported using AI for school was 24 percentage points, double the gap observed in 2023.[16]

Rural and underresourced districts may also lack the infrastructure that makes widespread AI use realistic. In our survey, 38 percent of respondents representing 28 states and territories considered technical infrastructure to be a “very” or “extremely significant” barrier to advancing AI integration. One said, “While some districts are racing ahead with policy and strategic planning, others cannot afford to pilot or procure tools.”[17] As federal subsidies for Wi-Fi and broadband access are scaled back, it will fall to states to bridge these gaps. Over half of respondents (58 percent) indicated their state has planned or is planning to aid districts with AI infrastructure and access.

Help in identifying effective, proven AI solutions. Hundreds of AI-enabled tools are on the market, and companies are constantly launching more. OpenAI, Google, and Anthropic update their products several times a year. This influx of tools and capabilities has overwhelmed many teachers and district administrators. Districts have many options but little evidence of tool effectiveness or alignment with their needs.

Our studies surfaced a clear desire for more guidance on choosing high-quality AI solutions that are effective, feasible to implement, and meet ethical and privacy standards. District leaders said they want “fewer, better” tools, stronger evidence, more trusted vetting sources, and procurement support to ensure student data privacy and fair vendor pricing. Vendors “are really tokenizing our students’ data,” one district leader said. “Access is a huge pain point for us. What does that mean for equity across the system?”[18]

Districts struggle to set clear AI adoption strategies—or any at all. This absence leads teachers to cobble together their own solutions, which can lead to incoherent or misaligned use across classrooms and schools. District leaders report encountering dozens to more than a hundred AI-enabled programs within a single school. In our California study, for example, over half the teachers we interviewed reported that existing tools did not meet their needs, prompting some to combine multiple tools and others to develop their own.

Some districts are experimenting with more tailored approaches. For example, one created a platform that gives teachers access to multiple AI tools at once. Another contracted with an ed tech company to build a custom tool to support their mastery-based learning and career and technical education goals.

In the absence of evidence of effectiveness, some districts are exploring outcomes-based contracts to incentivize vendors to deliver on student results.[19] In 2025, we convened more than 40 developers, educators, advocates, funders, and system leaders to better understand gaps between the AI marketplace and the needs of schools and school systems. They reported few trusted sources of evidence to guide adoption and implementation. As one expert put it, leaders often rely on informal signals—“The district next door is using Product X, so it must be good”—rather than on rigorous research.

Preserving and deepening human connection. Clarifying a vision for AI’s use is critical. Across our research, we heard a consistent call from teachers, district leaders, and experts: AI must foster, not replace, human relationships in schools.

At our 2025 convening, we asked developers, educators, advocates, funders, and system leaders with AI expertise to sketch a vision of an ideal AI-powered future in schools. “Human-centered” was the most common term used. Many educators in our California study were concerned that AI might degrade the relationships between students and teachers and among students themselves. This concern motivated the pilot teams to design for connection over isolation. “We’re in a mental health crisis in the United States,” said Daniel Whitlock, vice principal at Gilroy Prep, part of Navigator Schools. “Either we keep going down that road … or we look at [AI] from a different perspective, where we use it as a tool to increase our humanity versus taking it away.”[20]

We found that AI can support, rather than alienate, students. In early adopter districts, teachers using AI tools found that they could get to know their multilingual and English-learner students more deeply. Because students worked in their home language, they were more vulnerable and open, helping to bridge language barriers. “Giving our students the opportunity to talk about what they want for themselves [in their home language] … is helping to heal some of that and help them see that we really do see them as partners in their education,” one educator said. “[B]ecause most of us have been here a really long time, we build our own stories about why kids are or are not doing what we want them to do in classes. And then that’s a self-fulfilling prophecy for what is happening in teaching and learning.”[21]

How States Can Advance AI Strategies

Besides navigating increasingly complex environments and expanding responsibilities, teachers are also expected to implement AI. By articulating a clear vision for the use of AI in teaching and learning, providing timely guidance amid rapid technological change, and creating the conditions for districts to explore new approaches safely and responsibly, state leaders can help their teachers and districts meet this moment. As AI evolves and reshapes K-12, state boards can help districts move toward rewarding, creative, coherent AI use. Offering guardrails rather than mandates, capacity rather than compliance, and permission rather than risk aversion, states can help districts strengthen teaching, advance learning, and promote more equitable outcomes using AI.

The following recommendations for state board tasks—and first steps to achieving them—reflect what teachers, districts, state leaders, families, and experts have collectively surfaced as their most urgent needs.

Define a statewide vision for AI. It should align with workforce needs, instructional goals, and existing personalized learning or assessment reforms. If such a vision document has not yet been issued, consider guidance and guardrails that reduce risk while enabling local innovation. As first steps, state boards can create AI workforce- or education-centered task forces, collaborate across agencies to clarify a statewide vision for AI, adopt an AI literacy framework for students and educators, and issue model policies for responsible AI use (box 1).

Box 1. Beginning with a Vision

Ohio announced a statewide vision for AI grounded in workforce improvements and required all districts to establish AI policies by December 2025. InnovateOhio, a statewide board appointed by the secretary of state, launched a toolkit to support this work.[22] The North Carolina Department of Public Instruction describes its AI guidance as a living document and includes an update log that tracks adjustments over time.[23]

Shift toward a “learning organization” model. Such a model will emphasize cross-functional teams, rapid guidance updates, structured opportunities for innovation, and a focus on capacity rather than compliance. As first steps, state boards can create a regulatory sandbox that allows monitored district experimentation without punitive compliance, consider establishing innovation zones that enable responsible exploration and evidence generation for AI tools and strategies, and secure federal and philanthropic funding for statewide AI training, pilot programs, and research (box 2).

Box 2. Learning with Pilots

The Indiana Department of Education hosted a competitive AI-Powered Platform Pilot Grant opportunity to fund AI professional learning opportunities and pilot AI-enabled platforms that reached 2,500 teachers in 116 schools.[24]

Invest in educator capacity. States can do so through professional learning ecosystems, teacher advisory councils, AI learning labs, and AI literacy frameworks. As first steps, state boards can dedicate a state-level position to lead educational AI work; fund statewide or regional teacher professional learning ecosystems, including cohorts; create a teacher advisory council on AI to shape ongoing guidance; and launch AI learning labs for teachers to pilot instructional uses and share findings (box 3).

Box 3. Investing in Educators

The Utah Department of Education hosted AI-powered lesson planning workshops and created an online portal for teachers to share their results in 2024–25.[25] Approximately a quarter of teachers in the state participated.

Convene and coordinate stakeholders to share responsibility and ensure coherent implementation and shared learning. These stakeholders ought to include districts, higher education, researchers, workforce partners, and family advocacy organizations. As first steps, state boards can require teacher preparation programs to incorporate AI literacy and instructional integration and explore how to align state AI standards with workforce and industry needs for digital skills and computing careers (box 4).

Box 4. Convening Stakeholders

California recently convened its Artificial Intelligence Workgroup, comprising teachers, classified staff, students, administrators, higher education leaders, and industry experts. The state is partnering with TeachAI to engage multiple organizations in discussion about empowering educators to teach with and about AI.[26]

Ensure equitable access to tools and infrastructure. To do so, states need to prioritize rural and underresourced districts and leverage local, county, and regional offices for additional support. As first steps, state boards can dedicate regional office staff to support educational AI work, direct training and technical assistance to teachers in underresourced districts or districts with limited digital infrastructure, develop model vendor contract language, and establish vendor vetting and/or outcomes-based contracting processes that can be updated as evidence on effective AI products and procurement evolves (box 5).

Box 5. Ensuring Access

Washington State funded its nine educational service districts so they could add ed tech and AI personnel to assist districts with training, troubleshooting, cybersecurity, and external partnerships.[27] The Utah Department of Education vets and negotiates lower pricing on select AI tools for districts.[28]

Much about the future of education and AI’s role in it is unknowable. But our research points to what educators need right now and what states can do—and some are already doing—to support them. As the federal role in education shifts and contracts, responsibility for AI policy increasingly rests with states. To prepare students for a future that AI will inevitably shape, state board leaders must seize this moment to close gaps while mitigating risks.

Bree Dusseault is principal and managing director; Shira Haderlein, principal; Emily Prymula, content director; Chelsea Waite, principal; Melissa Fall, editorial director; Michael Berardino, senior research analyst; and Dana Harrison, senior research analyst, at the Center on Reinventing Public Education.

Notes

[1] Chelsea Waite, Steven Weiner, and Lisa Chu, “What California Teachers Are Trying, Building, and Learning with AI,” report (Center on Reinventing Public Education, July 2025). This study’s subjects were a nonrepresentative group of educators who opted into an AI learning community. Thus our findings are not necessarily generalizable but rather offer insight into how early adopters are using AI, which we hope can contribute to state policymakers’ understanding of what an educator- and student-centered vision for AI might look like.

[2] Christopher Joseph Doss et al., “AI Use in Schools Is Quickly Increasing but Guidance Lags Behind: Findings from the RAND Survey Panels,” research report (RAND Corporation, 2025).

[3] Gallup and the Walton Family Foundation, “Teaching for Tomorrow: Unlocking Six Weeks a Year With AI,” survey research (June 24, 2025). Note that use and benefits were uneven across teachers and districts.

[4] Jory Brass and Tom Liam Lynch, “Personalized Learning: A History of the Present,” Journal of Curriculum Theorizing 35, no. 2 (2020), https://doi.org/10.63997/jct.v35i2.807; Natalia Kucirkova, “EdTech Has Not Lived Up to Its Promises—Here’s How to Turn That Around,” opinion (World Economic Forum, July 13, 2022).

[5] Melissa Kay Diliberti et al., “Using Artificial Intelligence Tools in K-12 Classrooms,” research report (RAND, 2024).

[6] Waite, Weiner, and Chu, “What California Teachers Are Trying”; Bree Dusseault, Maddy Sims, and Michael Berardino, “AI Early Adopter Districts: The Promises and Challenges of Using AI to Transform Education,” report (CRPE, August 2025); Doss et al., “AI Use in Schools Is Quickly Increasing but Guidance Lags Behind,” 2025; Robin Lake, Bree Dusseault, and Maddy Sims, “Announcing CRPE’s Inaugural Think Forward Fellowship Cohort,” blog (CRPE, July 2025).

[7] Luona Lin, Kim Parker, and Juliana Menasce Horowitz, “How Teachers Manage Their Workload,” report (Pew Research Center, April 4, 2024); TNTP, “Teachers’ Time Use: A Review of the Literature,” executive summary (July 1, 2025); George Zuo, Sy Doan, and Julia H. Kaufman, “How Do Teachers Spend Professional Learning Time, and Does It Connect to Classroom Practice? Findings from the 2022 American Instructional Resources Survey,” research report (RAND Corporation, 2022).

[8] CRPE, “District AI Early Adopter Survey, Fall 2025” (March 2026).

[9] Gallup and the Walton Family Foundation, “Teaching for Tomorrow.”

[10] Waite, Weiner, and Chu, “What California Teachers Are Trying.”

[11] TeachAI, State AI Policy Tracker Database, internal document available upon request, accessed January 2026.

[12] Bree Dusseault, “New State AI Policies Released: Signs Point to Inconsistency and Fragmentation,” brief (CRPE, March 12, 2024).

[13] Doss et al., “AI Use in Schools”; Robin Lake and Lydia Rainey, “We Can’t Blow It: District Leaders Are Optimistic about AI but Urgently Need Help,” brief (CRPE, June 2025).

[14] CRPE, “District AI Early Adopter Survey.”

[15] Lake and Rainey, “We Can’t Blow It.”

[16] Melissa Kay Diliberti, Robin J. Lake, and Steven R. Weiner, “More Districts Are Training Teachers on Artificial Intelligence: Findings from the American School District Panel,” research report (RAND Corp., April 8, 2025).

[17] CRPE, “District AI Early Adopter Survey.”

[18] CRPE, “District AI Early Adopter Survey.”

[19] Raymond C. Pierce, “Closing the Digital Divide with Outcomes-Based Contracting Solutions,” opinion, Forbes, September 8, 2025.

[20] Waite, Weiner, and Chu, “What California Teachers Are Trying.”

[21] CRPE, “District AI Early Adopter Survey.”

[22] Ohio Department of Education and Workforce, “AI in Ohio’s Education,” web page, updated December 30, 2025; InnovateOhio, Ohio’s AI in Education Coalition, AI Strategy, November 20, 2024.

[23] North Carolina Department of Public Instruction, “North Carolina Generative AI Implementation Recommendations and Considerations for PK-13 Public Schools,” AI guidelines, updated November 27, 2024.

[24] Byron Ernest, “A National Center for AI in Education,” Day One Project (Federation of American Scientists, June 25, 2024).

[25] Abby Sourwine, “ASU+GSV 2025: Utah Shares Plan for Statewide AI Education,” GovTech, April 16, 2025; Citizen Portal AI, “State Board Staff Outline $500,000 Teacher AI Training Program, Policy Guidance Work,” April 9, 2025.

[26] California Department of Education, “California Convenes Statewide AI in Education Workgroup for Public Schools,” post (American Association of Colleges of Teachers of Education, Ed Prep Matters, September 10, 2025); California Department of Education, “Guidance for the Safe and Effective Use of Artificial Intelligence in California Public Schools,” web page, reviewed December 31, 2025.

[27] Washington Association of Educational Service Districts, “Educational Technology Network,” web page.

[28] Utah State Board of Education, AI in Education Monthly Report, August 2, 2024; Utah Education Network, “Software,” web page.
